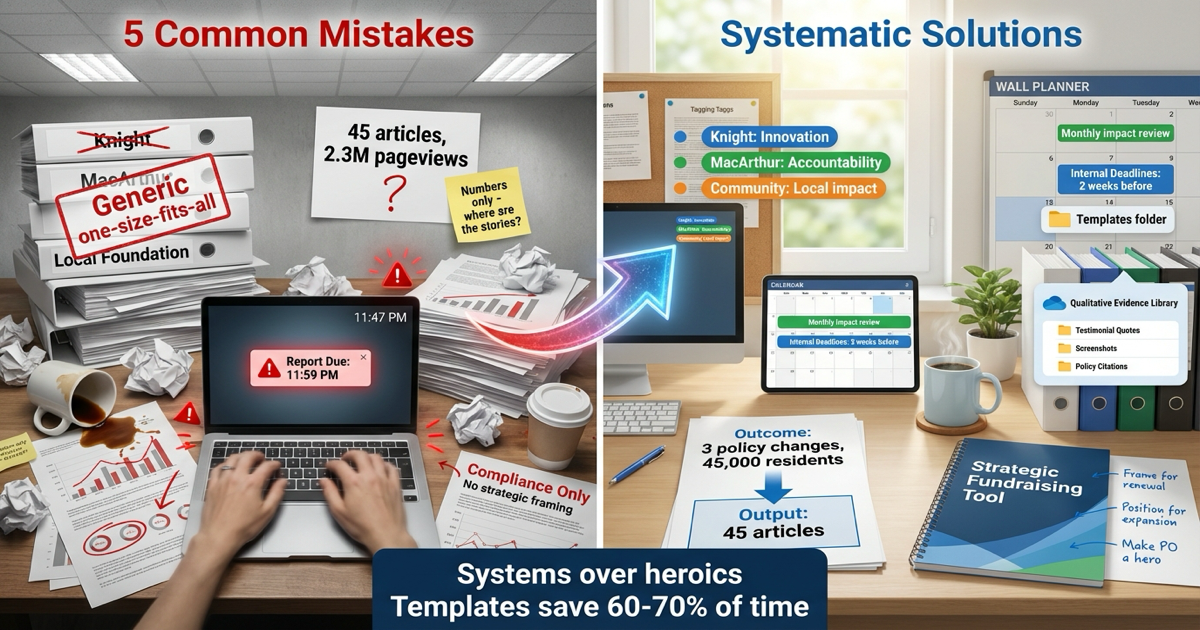

5 Common Grant Reporting Mistakes and How to Avoid Them

Grant reporting is one of the highest-stakes activities in nonprofit journalism. A strong report can lead to increased funding, multi-year commitments, and referrals to other funders. A weak report—even from an organization doing excellent work—can result in non-renewal, reduced funding, or damaged relationships that take years to repair.

The good news? Most reporting failures follow predictable patterns. By understanding these common mistakes and implementing systematic solutions, you can transform your reports from compliance documents into strategic assets.

1. Generic, One-Size-Fits-All Reports

The Mistake

You’re pressed for time. You have reports due to three different funders within two weeks. So you write one comprehensive report documenting all your work over the quarter, and you submit essentially the same document to all three funders—perhaps with minor customization like changing the salutation or swapping out the foundation name in a few places.

This is one of the most common and damaging mistakes in grant reporting.

Why It Fails So Completely

Funders are not a monolithic group. They are mission-driven organizations with highly specific strategic priorities, thematic interests, geographic focuses, and theories of change.

Consider these funders:

- Knight Foundation cares about innovation, sustainability, and information quality

- MacArthur Foundation prioritizes accountability journalism and systemic public interest impact

- A local community foundation wants to see neighborhood-specific benefits and local civic engagement

When you submit the same generic report to all three, you’re sending a clear message: “I don’t really understand or care about what matters to you specifically.”

The practical impact:

- Knight reads about your policy advocacy work and thinks, “This doesn’t align with our innovation focus”

- MacArthur sees extensive audience metrics and thinks, “They’re focused on reach, not accountability”

- The community foundation finds your statewide impact impressive but can’t find clear evidence of benefit to the specific zip codes they serve

Each funder concludes your work doesn’t align with their priorities—even though it actually does. They just can’t see it through the fog of irrelevant information.

The Fix: Hyper-Personalized Reporting

The solution requires two components:

1. Multi-dimensional tagging from the start

As you capture impact evidence throughout the grant period, tag each piece of evidence with multiple attributes:

- Funder/Grant: Which specific grants supported this work?

- Thematic Area: Social justice, education, health, government accountability, etc.

- Impact Type: Policy change, community mobilization, investigation launched, individual helped, etc.

- Geographic Focus: Specific neighborhoods, cities, regions

- Innovation Method: New technology, novel approach, experimental format (for innovation-focused funders)
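One way to make this tagging concrete is a small record type with one field per attribute. The sketch below is a minimal illustration in Python, not a prescribed schema: the class name `ImpactEvidence`, its field names, the tag values, and the date are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ImpactEvidence:
    """One piece of impact evidence, tagged along the attributes listed above."""
    summary: str
    captured_on: date
    grants: set[str] = field(default_factory=set)              # Funder/Grant
    themes: set[str] = field(default_factory=set)              # Thematic Area
    impact_types: set[str] = field(default_factory=set)        # Impact Type
    geographies: set[str] = field(default_factory=set)         # Geographic Focus
    innovation_methods: set[str] = field(default_factory=set)  # Innovation Method


# Hypothetical record; every value here is illustrative.
evidence = ImpactEvidence(
    summary="City council created an affordable housing trust fund",
    captured_on=date(2024, 3, 14),
    grants={"macarthur", "community-foundation"},
    themes={"government accountability"},
    impact_types={"policy change"},
    geographies={"eastside"},
)
```

Using sets for each attribute lets a single piece of evidence carry several tags at once, which is what makes it reusable across funders.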

2. Query-based report generation

When it’s time to write the Knight report, filter your database to show only:

- Work funded by Knight

- Innovation-focused initiatives

- Sustainability progress

- Community engagement metrics

For MacArthur, filter for:

- Accountability journalism

- Policy impact

- Public interest outcomes

- Systemic change
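The filtering step described above can be sketched in a few lines. This is an assumption-heavy illustration rather than a real grant-management tool: the function name `select_evidence`, the dictionary keys, the tag strings, and the three sample records are all invented for the example.

```python
def select_evidence(records, funder, any_of_themes):
    """Return records supported by `funder` that carry at least one wanted theme."""
    wanted = set(any_of_themes)
    return [
        r for r in records
        if funder in r["grants"] and wanted & set(r["themes"])
    ]


# Hypothetical evidence log; summaries and tags are illustrative only.
records = [
    {"summary": "Text-alert pilot launched",
     "grants": ["knight"], "themes": ["innovation"]},
    {"summary": "Housing investigation led to council vote",
     "grants": ["macarthur", "knight"], "themes": ["accountability", "policy impact"]},
    {"summary": "Membership program expanded",
     "grants": ["knight"], "themes": ["sustainability"]},
]

knight_items = select_evidence(records, "knight", ["innovation", "sustainability"])
macarthur_items = select_evidence(
    records, "macarthur", ["accountability", "policy impact", "systemic change"]
)
```

The same evidence log feeds both reports; only the filter changes. In practice a spreadsheet filter or database query would do the same job.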

The result: Each funder receives a report that speaks directly and exclusively to their mission, using their language, highlighting the outcomes they care most about. Every example feels relevant. Every metric matters to them specifically.

The message this sends: “We deeply understand your mission, and we’re precisely aligned with your priorities.”

2. Output-Focused Metrics: Confusing Activity with Impact

The Mistake

Your report leads with:

- “Published 45 articles”

- “Generated 2.3 million pageviews”

- “Posted 312 social media updates”

- “Gained 8,500 new followers”

These are outputs—evidence of activity, not evidence of change.

Why It Fails

Ten years ago, funders accepted audience metrics as proxies for impact. Those days are over. The journalism funding community has moved decisively from outputs to outcomes. They want evidence of real-world change: policy reforms, investigations launched, communities mobilized, individual lives tangibly improved.

When you lead with outputs, funders hear: “We’re busy and popular, but we can’t prove we’re making a difference.”

The hard truth: In a competitive funding environment, outputs without outcomes suggest your journalism is generating engagement but not generating change. And funders don’t fund engagement—they fund change.

The Fix: Lead with Outcomes, Support with Context

Completely restructure how you present your work:

Instead of:

“We published 45 articles on housing policy, reaching 2.3 million readers.”

Write:

“Our housing accountability investigation led to three concrete policy changes affecting 45,000 residents:

- The city council voted 8-1 to create a $5 million affordable housing trust fund

- The state housing authority launched an investigation into landlord practices we exposed

- Two major property management companies changed their eviction policies

This impact resulted from 45 deeply reported articles published over six months, which collectively reached 2.3 million readers and were cited in official proceedings 23 times.”

The structure:

- Lead with the outcome (policy change)

- Provide specific, verifiable details

- Show the scale of impact (45,000 residents)

- Then provide output context (45 articles, 2.3M reach)

The outputs now serve their proper role: supporting evidence that explains how the outcome was achieved. The outcome is the headline.

Practical Examples by Impact Type

Policy Change:

- Law passed or amended (cite legislation)

- Regulation modified (cite official documents)

- Budget allocated or reallocated (cite budget documents)

Investigation Launched:

- Government investigation opened (cite official announcements)

- Inspector General report initiated (cite IG documentation)

- Law enforcement action taken (cite public records)

Community Mobilization:

- Residents attended town hall (cite event records)

- Petition signatures gathered (document count)

- Community organization formed (document formation)

Individual Lives Changed:

- Person received benefits they were denied (testimonial + documentation)

- Family avoided foreclosure (testimonial + outcome)

- Student changed educational path (testimonial + evidence)

Each outcome requires evidence. Outcomes without evidence are just claims. Evidence makes them credible and powerful.
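A simple pre-submission check can enforce this rule. The sketch below assumes each outcome is stored as a dictionary with a `claim` and a list of `evidence` items. Those key names, the function name, and the sample entries are hypothetical.

```python
def outcomes_missing_evidence(outcomes):
    """Return the claims that have no supporting evidence attached."""
    return [o["claim"] for o in outcomes if not o.get("evidence")]


# Illustrative entries; the evidence labels are placeholders, not real documents.
draft_outcomes = [
    {"claim": "City council created a housing trust fund",
     "evidence": ["council meeting minutes"]},
    {"claim": "Property managers changed eviction policies",
     "evidence": []},
]

unsupported = outcomes_missing_evidence(draft_outcomes)
```

Any claim returned by the check either gets documentation attached or comes out of the report.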

3. Last-Minute Scrambles: The Crisis-Mode Reporting Cycle

The Mistake

Every quarter, the same pattern repeats:

- Week 10: Development Director realizes report is due in two weeks

- Week 11: Frantic emails to program staff requesting data

- Week 12, Day 1-5: Staff scrambles to remember what happened months ago

- Week 12, Day 6: Late-night report writing with incomplete information

- Week 12, Day 7: Submission at 11:47 PM, riddled with errors and gaps

This reactive, crisis-mode approach treats each report as a standalone emergency project.

Why It Fails

Immediate problems:

- Incomplete data (impact that occurred months ago is forgotten)

- Errors and inconsistencies (no time for quality review)

- Exhausted, resentful team members

- Mediocre reports that don’t showcase your best work

Strategic problems:

- Time pressure prevents thoughtful narrative development

- No opportunity to craft compelling stories

- Missed chances to position work for renewal or expansion

- Damaged internal relationships between development and program teams

The hidden cost: This pattern makes reporting feel like punishment, ensuring your team will resist implementing better systems. Negative association with reporting means less buy-in for solutions.

The Fix: Operationalize the Reporting Cycle

Transform reporting from an event into a system with four components:

1. Shared Grant Management Calendar

Create a centralized calendar (Google Calendar, Outlook, or grant management software) that includes:

- All report due dates

- Internal deadlines (2-3 weeks before external deadlines)

- Monthly impact review meetings

- Mid-year check-ins with funders

- Grant renewal windows

Make this calendar visible to the entire development and program teams. No surprises.
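Internal deadlines can be derived from the external due dates rather than entered by hand. The following is a minimal sketch using Python's standard `datetime` module. The function name `internal_deadline` and the sample due date are assumptions for illustration.

```python
from datetime import date, timedelta


def internal_deadline(external_due, weeks_before=3):
    """Return the internal deadline, set a number of weeks before the funder's due date."""
    return external_due - timedelta(weeks=weeks_before)


# Hypothetical funder due date.
report_due = date(2025, 6, 30)
draft_due = internal_deadline(report_due)                     # 3 weeks before
latest_draft_due = internal_deadline(report_due, weeks_before=2)
```

With a 30 June due date, the three-week buffer puts the internal deadline on 9 June and the two-week buffer on 16 June.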

2. Clear Role Division

Program staff responsibility:

- Capture impact evidence in real-time using simple forms

- Attend monthly impact review meetings

- Provide context and interpretation of outcomes

- Respond to specific questions during report drafting

Development staff responsibility:

- Set up easy capture systems (forms, Slack channels, etc.)

- Organize and analyze collected evidence

- Draft reports and narratives

- Manage funder relationships and submission process

Critical principle: Program staff capture raw evidence; development staff transform it into funder-appropriate narratives. No one does both.

3. Standardized Templates

Develop internal templates for common report types:

- Quarterly foundation reports

- Annual impact summaries

- Project completion reports

- Federal grant compliance reports

Templates should include:

- Standard sections and prompts

- Required data points

- Character/word count guidelines

- Examples of strong vs. weak responses

Templates can save an estimated 60-70% of the time otherwise spent on formatting and structural decisions.

4. Regular Impact Reviews

Hold monthly 30-minute meetings to review:

- Impact captured in the past month

- Upcoming reporting deadlines

- Strong stories emerging for future reports

- Gaps in evidence collection

This habit means you’re always report-ready. When a deadline approaches, you’re compiling existing evidence, not scrambling to create it.

4. Ignoring the Qualitative Data: The Numbers-Only Trap

The Mistake

Your impact tracking focuses exclusively on what’s easily quantifiable:

- Website analytics

- Audience demographics

- Article counts

- Social media metrics

Meanwhile, your most powerful evidence goes uncaptured:

- The community member who testified at a city council meeting after reading your series

- The official who cited your investigation in a policy memo

- The teacher who used your education reporting in her classroom

- The nonprofit director who launched a program inspired by your coverage

This narrative-based, qualitative impact disappears because you have no system to capture it.

Why It Fails

The most compelling and persuasive evidence of journalism’s impact is almost never quantitative. Funders remember stories, not statistics.

What moves funders:

- The direct quote from a parent whose child received special education services after your investigation revealed systemic failures

- The scanned policy memo showing a legislator explicitly citing your reporting

- The video testimonial from a community leader explaining how your coverage catalyzed neighborhood action

What doesn’t move funders:

- A table showing 47,392 pageviews

Quantitative data provides scope and credibility. Qualitative data provides emotional resonance and memorability. Reports need both.

The Fix: Systems for Qualitative Evidence Capture

1. Create low-friction capture methods

Dedicated Slack or email channel:

- #impact-evidence where any team member can share:

  - Screenshots of social media reactions

  - Forwarded emails from community members

  - Links to other media citing your work

  - Notes from conversations about impact

Post-publication check-in form:

- 3-5 simple questions reporters answer 2-4 weeks after major story publication:

  - Has anyone reached out about this story?

  - Have you heard about any responses from officials or institutions?

  - Have you seen this story referenced elsewhere?

  - Any unexpected reactions or outcomes?

Monthly community scan:

- Assign one person to spend 30 minutes searching:

  - Government meeting minutes for citations

  - Social media for substantive discussions

  - Other media for references to your work

  - Academic or advocacy papers citing your reporting

2. Structure and tag qualitative data

When you capture qualitative evidence, immediately tag it:

- Type (testimonial, citation, policy reference, community response)

- Source (community member, official, peer media, academic)

- Story/project connected to

- Funder/grant relevant to

- Date captured

This makes qualitative evidence searchable and usable, not buried in email.
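To show what "searchable" can look like, here is a small sketch of a tagged qualitative record and a lookup helper. The class name `QualitativeEvidence`, the helper `find`, the field names, and the sample items are all hypothetical. A shared spreadsheet with the same columns would serve equally well.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class QualitativeEvidence:
    text: str
    kind: str          # testimonial, citation, policy reference, community response
    source: str        # community member, official, peer media, academic
    project: str       # story or project the evidence is connected to
    grant: str         # funder or grant it is relevant to
    captured_on: date


def find(items, **criteria):
    """Return items whose fields equal every given criterion."""
    return [
        item for item in items
        if all(getattr(item, name) == value for name, value in criteria.items())
    ]


# Illustrative entries; text, tags, and dates are placeholders.
library = [
    QualitativeEvidence("Parent describes services restored", "testimonial",
                        "community member", "special-ed series", "knight",
                        date(2024, 5, 2)),
    QualitativeEvidence("Memo cites our investigation", "policy reference",
                        "official", "housing series", "macarthur",
                        date(2024, 5, 9)),
]

testimonials_for_knight = find(library, kind="testimonial", grant="knight")
```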

3. Create a quotes and testimonials library

Maintain a running document of powerful quotes organized by:

- Impact theme (accountability, community benefit, individual change)

- Funder relevance

- Project/coverage area

When report time comes, you have a menu of pre-approved, powerful testimonials ready to deploy.

5. Missing the Strategic Opportunity: Reports as Compliance vs. Fundraising

The Mistake

You view grant reports as backward-looking compliance documents that satisfy contractual obligations. You write to inform funders what happened, check the reporting box, and move on.

You miss that reports are actually your most powerful fundraising tool.

Why It Fails

Funders make decisions about future investment based substantially on past performance. Your report is not just documentation—it’s your case for renewal, expansion, and increased support.

What you’re missing:

- The opportunity to strengthen the relationship by demonstrating excellent stewardship

- The chance to position successful work as deserving expansion

- The moment to align your proven successes with the funder’s evolving priorities

- The platform to make program officers enthusiastic advocates for your work internally

When you treat reports as mere compliance, funders treat you as merely compliant. You get renewed at the same level or not at all.

The Fix: Data-Driven Narratives That Persuade

Transform reports from informational to persuasive by building narratives that:

1. Frame impact as aligned with the funder’s theory of change

Don’t just report what you did. Show how what you did advances the specific change the funder seeks.

For Knight Foundation:

“Your investment enabled us to experiment with three innovative distribution approaches. The most successful—hyper-local text message alerts for breaking accountability news—increased engagement among low-information communities by 340%. This demonstrates a scalable model for reaching underserved audiences that we’d like to expand with continued support.”

For MacArthur Foundation:

“This grant advanced your strategy of supporting accountability journalism that drives systemic reform. Our investigation didn’t just inform the public—it directly catalyzed two pieces of legislation now moving through committee, and triggered a state-level investigation that remains ongoing. We’ve identified three additional accountability gaps that follow the same pattern and would benefit from similar sustained investigative coverage.”

2. Position successful work as deserving expansion

Don’t just report success—make the case for scaling it:

“This pilot project in three neighborhoods proved that embedded community journalists can identify accountability issues weeks before they surface publicly. Early intervention prevented two potential scandals and built trust with community organizations. We believe this model should expand to cover five additional neighborhoods experiencing similar governance challenges. Based on our demonstrated $40,000 per-neighborhood cost and documented impact, expanding this program would require $200,000 in additional annual support.”
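The budget request in this example follows directly from the per-neighborhood cost, and showing that arithmetic helps a program officer verify it at a glance. A minimal sketch, with the function name `expansion_budget` chosen only for illustration:

```python
def expansion_budget(cost_per_neighborhood, additional_neighborhoods):
    """Annual support needed to add neighborhoods at a known per-neighborhood cost."""
    return cost_per_neighborhood * additional_neighborhoods


# Figures taken from the example paragraph above.
requested = expansion_budget(40_000, 5)
```

Five additional neighborhoods at $40,000 each gives the $200,000 quoted in the example.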

3. Make the program officer a hero

Remember: program officers need to justify their decisions to their leadership. Make that easy:

“Thanks to your vision in supporting this work, we’ve achieved three policy changes affecting 45,000 residents—a per-person impact cost of approximately $1.11. This ROI compares favorably to other civic interventions, and the systemic nature of policy change means the impact compounds annually. You’ve enabled work that will benefit this community for years to come.”

Give them the evidence and framing they can use internally to advocate for continued support.
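A per-person cost figure like the one in the example is just the grant amount divided by the number of people affected. The example does not state the grant size. The sketch below assumes a grant of $50,000, which is the amount consistent with roughly $1.11 per resident across 45,000 residents. The function name is also an assumption.

```python
def cost_per_person(grant_amount, people_affected):
    """Grant dollars spent per person affected, rounded to cents."""
    return round(grant_amount / people_affected, 2)


# ASSUMPTION: $50,000 grant; the source text gives only the 45,000 residents
# and the approximate $1.11 result.
per_resident = cost_per_person(50_000, 45_000)
```

Stating the inputs alongside the result lets the program officer reproduce the number when presenting it internally.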

4. End with clear next steps

Never end a report with just “thank you.” End with a path forward:

“Based on this year’s success, we’d welcome the opportunity to discuss expanding this coverage model to adjacent communities. We’ve drafted a preliminary proposal for three additional reporters focusing on education accountability, which aligns with your foundation’s increased emphasis on education equity. Would you be open to a conversation about this expansion opportunity?”

This positions you as a proactive partner with vision, not a passive grantee waiting for direction.

The Common Thread: Systems Over Heroics

Notice the pattern across all five mistakes? The fix is never “work harder” or “care more.” The fix is always systematic:

- Better capture systems eliminate scrambling

- Tagging systems enable personalization

- Templates and processes reduce stress

- Regular reviews surface strong stories

- Strategic framing transforms reports into fundraising tools

Organizations trapped in crisis-mode reporting don’t fail because they lack talent or dedication. They fail because they lack systems.

The ones that succeed treat reporting as a core operational capability deserving systematic investment—and they reap the rewards through stronger funder relationships, higher renewal rates, and increased support over time.